Weekly Seminar in AI & Machine Learning

Sponsored by the HPI Research Center in Machine Learning and Data Science at UC Irvine

Jan. 13 DBH 4011 1 pm |

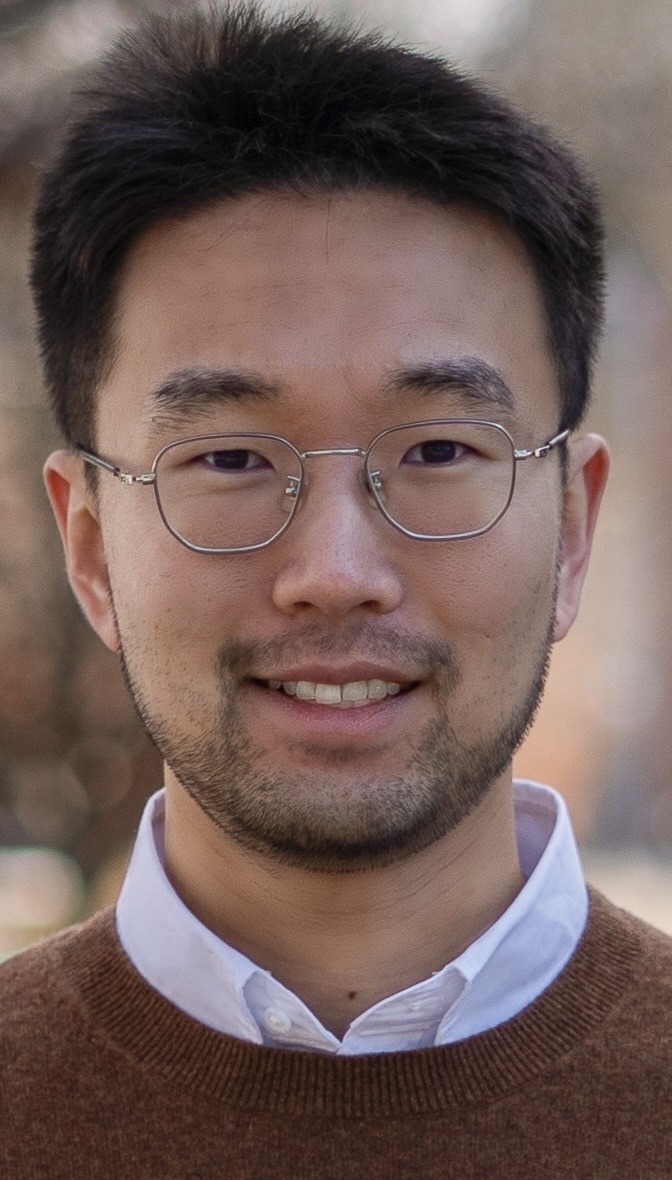

Scientific Machine Learning (SML) is an emerging interdisciplinary field with wide-ranging applications in domains such as public health, climate science, and drug discovery. The primary goal of SML is to develop data-driven surrogate models that can learn spatiotemporal dynamics or predict key system properties, thereby accelerating time-intensive simulations and reducing the need for real-world experiments. To make SML approaches truly reliable for domain experts, Uncertainty Quantification (UQ) plays a critical role in enabling risk assessment and informed decision-making. In this presentation, I will first introduce our recent advancements in UQ for spatiotemporal and multi-fidelity surrogate modeling with Bayesian deep learning, focusing on applications in accelerating computational epidemiology simulations. Following this, I will demonstrate how quantified uncertainties can be leveraged to design sample-efficient algorithms for adaptive experimental design, with a focus on Bayesian Active Learning and Bayesian Optimization.

Bio: Dongxia (Allen) Wu is a Ph.D. student in the Department of Computer Science and Engineering at UC San Diego, advised by Rose Yu and Yian Ma. His research focuses on Bayesian Deep Learning, Sequential Decision Making, Scientific Machine Learning, and Spatiotemporal Modeling, with applications in public health, climate science, and drug design. His work has been published in ICML, KDD, AISTATS, and PNAS. He developed DeepGLEAM for COVID-19 incident death forecasting, which achieved the highest coverage ranking in the CDC Forecasting Hub. He is also a recipient of the UCSD HDSI Ph.D. Fellowship. |

Jan. 20 |

No Seminar (Martin Luther King, Jr. Day)

|

Jan. 27 DBH 4011 1 pm |

Satish Kumar Thittamaranahalli, Research Associate Professor, Department of Computer Science, University of Southern California

FastMap was first introduced in the Data Mining community for generating Euclidean embeddings of complex objects. In this talk, I will first generalize FastMap to generate Euclidean embeddings of graphs in near-linear time: the pairwise Euclidean distances approximate a desired graph-based distance function on the vertices. I will then apply the graph version of FastMap to efficiently solve various graph-theoretic problems of significant interest in AI, including shortest-path computations, facility location, top-K centrality computations, and community detection and block modeling. I will also present a novel learning framework, called FastMapSVM, that combines FastMap and Support Vector Machines. I will then apply FastMapSVM to predict the satisfiability of Constraint Satisfaction Problems and to classify seismograms in Earthquake Science.

Bio: Prof. Satish Kumar Thittamaranahalli (T. K. Satish Kumar) leads the Collaboratory for Algorithmic Techniques and Artificial Intelligence at the Information Sciences Institute of the University of Southern California. He is a Research Associate Professor in USC’s Department of Computer Science, Department of Physics and Astronomy, and Department of Industrial and Systems Engineering. He has published extensively on numerous topics spanning diverse areas such as Constraint Reasoning, Planning and Scheduling, Probabilistic Reasoning, Machine Learning and Data Informatics, Robotics, Combinatorial Optimization, Approximation and Randomization, Heuristic Search, Model-Based Reasoning, Computational Physics, Knowledge Representation, and Spatiotemporal Reasoning. He has served on the program committees of many international conferences and is a winner of three Best Paper Awards. Prof. Kumar received his PhD in Computer Science from Stanford University in March 2005. In the past, he has also been a Visiting Student at the NASA Ames Research Center, a Postdoctoral Research Scholar at the University of California, Berkeley, a Research Scientist at the Institute for Human and Machine Cognition, a Visiting Assistant Professor at the University of West Florida, and a Senior Research and Development Scientist at Mission Critical Technologies. |

Feb. 3 DBH 4011 1 pm |

Francesco Immorlano, Postdoctoral Researcher, Department of Computer Science, University of California, Irvine

Earth system models (ESMs) are the main tools currently used to project global mean temperature rise under several future greenhouse gas emission scenarios. Accurate and precise climate projections are required for climate adaptation and mitigation, but these models still exhibit large uncertainties that are a major roadblock for policy makers. Several approaches have been developed to reduce the spread of climate projections, yet those methods cannot capture the non-linear complexity inherent in the climate system. Using a Transfer Learning approach, Machine Learning can leverage and combine the knowledge gained from ESM simulations and historical observations to more accurately project global surface air temperature fields in the 21st century. This helps enhance the representation of future projections and their associated spatial patterns, which are critical to climate sensitivity.

Bio: Francesco Immorlano is a Postdoctoral Researcher at the University of California, Irvine with a Ph.D. in Engineering of Complex Systems from the University of Salento. Since May 2020 he has been collaborating with the CMCC Foundation and was a visiting researcher at Columbia University in Spring 2022. His main research work is focused on deep learning and generative models with a specific application to the climate science domain. |

Feb. 10 DBH 4011 1 pm |

Language model agents are tackling challenging tasks from embodied planning to web navigation to programming. These models are a powerful artifact of natural language processing research that is being applied to interactive environments traditionally reserved for reinforcement learning. However, many environments are not natively expressed in language, resulting in poor alignment between language representations and true states and actions. Additionally, while language models are generally capable, their biases from pretraining can be unaligned with specific environment dynamics. In this talk, I will cover our research into rectifying these issues through methods such as: (1) mapping high-level language model plans to low-level actions, (2) optimizing language model agent inputs using reinforcement learning, and (3) in-context policy improvement for continual task adaptation.

Bio: Kolby Nottingham is a 5th-year CS PhD student at the University of California, Irvine, co-advised by Roy Fox and Sameer Singh. His research applies algorithms and insights from reinforcement learning to improve the potential of agentic language model applications. He has diverse industry experience from internships at companies such as Nvidia, Unity, and Allen AI. Kolby is also excited by prospective applications of his work in the video game industry and has experience doing research for game studios such as Latitude and Riot Games. |

Feb. 17 |

No Seminar (Presidents’ Day)

|

Feb. 24 DBH 4011 11 am |

This talk examines methods for enhancing language model capabilities through post-training. While large language models have led to major breakthroughs in natural language processing, significant challenges persist due to the inherent ambiguity and underspecification in language. I will present a spectrum ranging from underspecification (preference modeling) to full specification (precise instruction following with verifiable constraints), and propose modeling approaches to increase language models’ contextual robustness and precision. I will demonstrate how models can become more precise instruction followers through synthetic data, preference tuning, and reinforcement learning from verifiable rewards. On the preference data side, I will illustrate patterns of divergence in annotations, showing how disagreements stem from underspecification, and propose alternatives to the Bradley-Terry reward model for capturing pluralistic preferences. The talk concludes by connecting underspecification and reinforcement learning through a novel method: reinforced clarification question generation, which helps models obtain missing contextual information that is consequential for making predictions. Throughout the presentation, I will synthesize these research threads to demonstrate how post-training approaches can improve model steerability and contextual understanding when facing underspecification.

Bio: Valentina Pyatkin is a postdoctoral researcher at the Allen Institute for AI and the University of Washington, advised by Prof. Yejin Choi. She is additionally supported by an Eric and Wendy Schmidt Postdoctoral Award. She obtained her PhD in Computer Science from the NLP lab at Bar-Ilan University. Her work has been awarded an ACL Outstanding Paper Award and the ACL Best Theme Paper Award. During her doctoral studies, she conducted research internships at Google and the Allen Institute for AI, where she received the AI2 Outstanding Intern of the Year Award. She holds an MSc from the University of Edinburgh and a BA from the University of Zurich. Valentina’s research focuses on post-training and the adaptation of language models, specifically for making them better semantic and pragmatic reasoners. |

Feb. 25 DBH 4011 11 am |

Generative models, such as ChatGPT and DALL-E, are used by millions of people daily for tasks ranging from programming and content creation to resume filtering. These models often create the impression of being “intelligent,” which can incentivize careless use in critical applications. While generative models are empowering, they appear to be black boxes, and their misuse can result in harmful or unlawful outcomes. In this talk, I will present algorithms and tools for dissecting and analyzing generative models using holistic, causal, and data-centric approaches. By applying these methods to state-of-the-art models, we can foster trust in these technologies by uncovering human-interpretable concepts that underpin their behavior, scrutinizing their extensive training data, and evaluating their learning processes. Finally, I will reflect on how generative models have transformed the field of AI and discuss the challenges that remain in ensuring their responsible development and use.

Bio: Yanai Elazar is a Postdoctoral Researcher at AI2 and the University of Washington. Prior to that, he completed his PhD in Computer Science at Bar-Ilan University. He is interested in the science of generative models, for which he develops algorithms and tools for understanding what makes models work, how, and why. |

Feb. 27 DBH 4011 11 am |

Current success in artificial intelligence relies heavily on internet-scale data with unified representations. However, such large-scale homogeneous data is not readily available for spatial computing applications involving 3D geometry. In this talk, I will present approaches to building spatial intelligence systems with limited 3D data by integrating existing mathematical models into existing machine learning pipelines. I will share my work applying these approaches to develop data-driven methods that can synthesize and analyze 3D geometry. Finally, I will discuss future opportunities and challenges of data-efficient spatial intelligence.

Bio: Guandao Yang is a postdoctoral scholar at Stanford, where he works with Professor Leonidas Guibas and Professor Gordon Wetzstein. His research lies at the intersection of computer graphics, computer vision, and machine learning. He completed his Ph.D. at Cornell in 2023, advised by Professor Serge Belongie and Professor Bharath Hariharan. During his Ph.D., Guandao collaborated with various industry research labs, including NVIDIA, Intel, and Google. He received his bachelor’s degree from Cornell University in Ithaca, majoring in Mathematics and Computer Science. His research received funding support from Magic Leap, Intel, Google, Nvidia, Samsung, and Army Research Lab. |

March 11 DBH 4011 11 am |

Modern deep learning has achieved remarkable results, but the design of training methodologies largely relies on guess-and-check approaches. Thorough empirical studies of recent massive language models (LMs) are prohibitively expensive, underscoring the need for theoretical insights, but classical ML theory struggles to describe modern training paradigms. I present a novel approach to developing prescriptive theoretical results that can directly translate to improved training methodologies for LMs. My research has yielded actionable improvements in model training across the LM development pipeline. For example, my theory motivates the design of MeZO, a fine-tuning algorithm that reduces memory usage by up to 12x and halves the number of GPU-hours required. Throughout the talk, to underscore the prescriptiveness of my theoretical insights, I will demonstrate the success of these theory-motivated algorithms on novel empirical settings published after the theory.

Bio: Sadhika Malladi is a final-year PhD student in Computer Science at Princeton University advised by Sanjeev Arora. Her research advances deep learning theory to capture modern-day training settings, yielding practical training improvements and meaningful insights into model behavior. She has co-organized multiple workshops, including Mathematical and Empirical Understanding of Foundation Models at ICLR 2024 and Mathematics for Modern Machine Learning (M3L) at NeurIPS 2024. She was named a 2025 Siebel Scholar. |

March 13 DBH 4011 11 am |

Language models (LMs) can effectively internalize knowledge from vast amounts of pre-training data, enabling them to achieve remarkable performance on exam-style benchmarks. Expanding their ability to compile, synthesize, and reason over large volumes of information on the fly will further unlock transformative applications, ranging from AI literature assistants to generative search engines. In this talk, I will present my research on advancing LMs for processing information at scale. (1) I will present my evaluation framework for LM-based information-seeking systems, emphasizing the importance of providing citations for verifying the model-generated answers. Our evaluation highlights shortcomings in LMs’ abilities to reliably process long-form texts (e.g., dozens of webpages), which I address by developing state-of-the-art long-context LMs that outperform leading industry efforts while using a small fraction of the computational budget. (2) I will then introduce my foundational work on using contrastive learning to produce high-performing text embeddings, which form the cornerstone of effective and scalable search. (3) In addition to building systems that can process large-scale information, I will discuss my contributions to creating efficient pre-training and customization methods for LMs, which enable scalable deployment of LM-powered applications across diverse settings. Finally, I will share my vision for the next generation of autonomous information processing systems and outline the foundational challenges that must be addressed to realize this vision.

Bio: Tianyu Gao is a fifth-year PhD student in the Department of Computer Science at Princeton University, advised by Danqi Chen. His research focuses on developing principled methods for training and adapting language models, many of which have been widely adopted across academia and industry. Driven by transformative applications, such as using language models as information-seeking tools, his work also advances robust evaluation and fosters a deeper understanding to guide the future development of language models. He led the first workshop on long-context foundation models at ICML 2024. He won an outstanding paper award at ACL 2022 and received an IBM PhD Fellowship in 2023. Before Princeton, he received his BEng from Tsinghua University in 2020. |

March 17 DBH 4011 11 am |

Generative visual models like Stable Diffusion and Sora generate photorealistic images and videos that are nearly indistinguishable from real ones to a naive observer. However, their grasp of the physical world remains an open question: Do they understand 3D geometry, light, and object interactions, or are they mere “pixel parrots” of their training data? Through systematic probing, I will demonstrate that these models surprisingly learn fundamental scene properties—intrinsic images such as surface normals, depth, albedo, and shading (à la Barrow & Tenenbaum, 1978)—without explicit supervision, which enables applications like image relighting. But I will also show that this knowledge is insufficient. Careful analysis reveals unexpected failures: inconsistent shadows, multiple vanishing points, and scenes that defy basic physics. All these findings suggest these models excel at local texture synthesis but struggle with global reasoning: a crucial gap between imitation and true understanding. I will then conclude by outlining a path toward generative world models that emulate global and counterfactual reasoning, causality, and physics.

Bio: Anand Bhattad is a Research Assistant Professor at the Toyota Technological Institute at Chicago. He earned his PhD from the University of Illinois Urbana-Champaign in 2024 under the mentorship of David Forsyth. His research interests lie at the intersection of computer vision and computer graphics, with a current focus on understanding the knowledge encoded in generative models. Anand has received Outstanding Reviewer honors at ICCV 2023 and CVPR 2021, and his CVPR 2022 paper was nominated for a Best Paper Award. He actively contributes to the research community by leading workshops at CVPR and ECCV, including “Scholars and Big Models: How Can Academics Adapt?” (CVPR 2023), “CV 20/20: A Retrospective Vision” (CVPR 2024), “Knowledge in Generative Models” (ECCV 2024), and “How to Stand Out in the Crowd?” (CVPR 2025). |

March 18 DBH 4011 11 am |

In this talk, I will discuss how to build natural language processing (NLP) systems that solve real-world problems requiring complex reasoning. I will address three key challenges. First, because real-world reasoning tasks often differ from the data used in pretraining, I will introduce WildChat, a dataset of reasoning questions collected from users, and demonstrate how training on it enhances language models’ reasoning abilities. Second, because supervision is often limited in practice, I will describe my approach to enabling models to perform multi-hop reasoning without direct supervision. Finally, since many real-world applications demand reasoning beyond natural language, I will introduce a language agent capable of acting on external feedback. I will conclude by outlining a vision for training the next generation of AI reasoning models.

Bio: Wenting Zhao is a Ph.D. candidate in Computer Science at Cornell University, advised by Claire Cardie and Sasha Rush. Her research focuses on the intersection of natural language processing and reasoning, where she develops techniques to effectively reason over real-world scenarios. Her work has been featured in The Washington Post and TechCrunch. She has co-organized several tutorials and workshops, including the VerifAI: AI Verification in the Wild workshop at ICLR 2025 and the Complex Reasoning in Natural Language tutorial at ACL 2023. In 2024, she was recognized as a rising star in Generative AI and was named Intern of the Year at the Allen Institute for AI in 2023. |

March 19 DBH 4011 11 am |

Scientific computing, which aims to accurately simulate complex physical phenomena, often requires substantial computational resources. By viewing data as continuous functions, we leverage the smoothness structures of function spaces to enable efficient large-scale simulations. We introduce the neural operator, a machine learning framework designed to approximate solution operators in infinite-dimensional spaces, achieving scalable physical simulations across diverse resolutions and geometries. Beginning with the Fourier Neural Operator, we explore recent advancements including scale-consistent learning techniques and adaptive mesh methods. We demonstrate the real-world impact of our framework through applications in weather prediction, carbon capture, and plasma dynamics, achieving speedups of several orders of magnitude.

Bio: Zongyi Li is a final-year PhD candidate in Computing + Mathematical Sciences at Caltech, working with Prof. Anima Anandkumar and Prof. Andrew Stuart. His research focuses on developing neural operator methods for accelerating scientific simulations. He has completed three summer internships at Nvidia (2022-2024). Zongyi received his undergraduate degrees in Computer Science and Mathematics from Washington University in St. Louis (2015-2019). His research has been supported by the Kortschak Scholarship, PIMCO Fellowship, Amazon AI4Science Fellowship, and Nvidia Fellowship. |

April 1 DBH 4011 11 am |

Large language models (LLMs) power a rapidly growing and increasingly impactful suite of AI technologies. However, due to their scale and complexity, we lack a fundamental scientific understanding of much of LLMs’ behavior, even when they are open source. The “black-box” nature of LLMs not only complicates model debugging and evaluation, but also limits trust and usability. In this talk, I will describe how my research on interpretability (i.e., understanding models’ inner workings) has answered key scientific questions about how models operate. I will then demonstrate how deeper insights into LLMs’ behavior enable both 1) targeted performance improvements and 2) the production of transparent, trustworthy explanations for human users.

Bio: Sarah Wiegreffe is a postdoctoral researcher at the Allen Institute for AI (Ai2) and the Allen School of Computer Science and Engineering at the University of Washington. She has worked on the explainability and interpretability of neural networks for NLP since 2017, with a focus on understanding how language models make predictions in order to make them more transparent to human users. She has been honored as a 3-time Rising Star in EECS, Machine Learning, and Generative AI. She received her PhD in computer science from Georgia Tech in 2022, during which time she interned at Google and Ai2 and won the Ai2 outstanding intern award. She frequently serves on conference program committees, receiving outstanding area chair awards at ACL 2023 and EMNLP 2024. |

April 4 DBH 4011 2 pm |

To advance AI toward true artificial general intelligence, it is crucial to incorporate a wider range of sensory inputs, including physical interaction, spatial navigation, and social dynamics. However, achieving the successes of self-supervised Large Language Models (LLMs) across other modalities in our physical and digital environments remains a significant challenge. In this talk, I will discuss how self-supervised learning methods can be harnessed to advance multimodal models beyond the need for human supervision. Firstly, I will highlight a series of research efforts on self-supervised visual scene understanding that leverage the capabilities of self-supervised models to “segment anything” without the need for 1.1 billion labeled segmentation masks, unlike the popular supervised approach, the Segment Anything Model (SAM). Secondly, I will demonstrate how generative and understanding models can work together synergistically, allowing them to complement and enhance each other. Lastly, I will explore the increasingly important techniques for learning from unlabeled or imperfect data within the context of data-centric representation learning. All these research topics are unified by the same core idea: advancing multimodal models beyond human supervision.

Bio: XuDong Wang is a final-year Ph.D. student in the Berkeley AI Research (BAIR) lab at UC Berkeley, advised by Prof. Trevor Darrell, and a research scientist on the Llama Research team at GenAI, Meta. He was previously a researcher at Google DeepMind (GDM) and the International Computer Science Institute (ICSI), and a research intern at Meta’s Fundamental AI Research (FAIR) labs and Generative AI (GenAI) Research team. His research focuses on self-supervised learning, multimodal models, and machine learning, with an emphasis on developing foundational AI systems that go beyond the constraints of human supervision. By advancing self-supervised learning techniques for multimodal models—minimizing reliance on human-annotated data—he aims to build intelligent systems capable of understanding and interacting with their environment in ways that mirror, and potentially surpass, the complexity, adaptability, and richness of human intelligence. He is a recipient of the William Oldham Fellowship at UC Berkeley, awarded for outstanding graduate research in EECS. |

April 21 DBH 4011 1 pm |

Felix Draxler, Postdoctoral Researcher, Department of Computer Science, University of California, Irvine

Generative models have achieved remarkable quality and success in a variety of machine learning applications, promising to become the standard paradigm for regression. However, each predominant approach comes with drawbacks in terms of inference speed, sample quality, training stability, or flexibility. In this talk, I will propose Free-Form Flows, a new generative model that offers fast data generation at high quality and flexibility. I will guide you through the fundamentals and showcase a variety of scientific applications.

Bio: Felix Draxler is a Postdoctoral Researcher at the University of California, Irvine. His research focuses on the fundamentals of generative models, with the goal of making them not only accurate but also fast and versatile. He received his PhD in 2024 from Heidelberg University, Germany. |

April 28 DBH 4011 1 pm |

Since emerging in 2020, neural radiance fields (NeRFs) have marked a transformative breakthrough in representing photorealistic 3D scenes. In the years since, numerous variants have emerged, enhancing performance, enabling the capture of dynamic changes over time, and tackling challenges in large-scale environments. Among these, NVIDIA’s Instant-NGP stood out, earning recognition as one of TIME Magazine’s Best Inventions of 2022. Radiance fields now facilitate advanced 3D scene understanding, leveraging large language models (LLMs) and diffusion models to enable sophisticated scene editing and manipulation. Their applications include robotics, where they support planning, navigation, and manipulation, and autonomous driving, where they serve as immersive simulation systems or as digital twins for video compression integrated in edge computing architectures. This lecture explores the evolution, capabilities, and practical impact of radiance fields in these cutting-edge domains.

Bio: Matúš Dopiriak is a 3rd-year PhD candidate at the Technical University in Košice, Department of Computers and Informatics, advised by Professor Ing. Juraj Gazda, PhD. His research explores the integration of radiance fields in autonomous mobility within edge computing architectures. Additionally, he studies the application of Large Vision-Language Models (LVLMs) to address edge-case scenarios in traffic through simulations that generate and manage these uncommon and hazardous conditions. |

May 5 ISEB 1010 4 pm |

Reinforcement learning is increasingly used to train robots for tasks where safety is critical, such as autonomous surgery and navigation. However, when combined with deep neural networks, these systems can become unpredictable and difficult to trust in contexts where even a single error is often unacceptable. This talk explores two complementary paths toward safer reinforcement learning: making agents more reliable through constrained training, and adding formal guarantees through techniques such as verification and shielding. In the second part of the talk, we will look at the growing role of world modeling in robotics and how this, together with the rise of large foundation models, opens up new challenges for ensuring safety in complex, real-world environments.

Bio: Davide Corsi is a postdoctoral researcher at the University of California, Irvine, where he works in the Intelligent Dynamics Lab led by Prof. Roy Fox. His research lies at the intersection of deep reinforcement learning and robotics, with a strong focus on ensuring that intelligent agents behave safely and reliably when deployed in real-world, safety-critical environments. He earned his PhD in Computer Science from the University of Verona under the supervision of Prof. Alessandro Farinelli, with a dissertation on safe deep reinforcement learning that explored both constrained policy optimization and formal verification techniques. During his PhD, he spent time as a visiting researcher at the Hebrew University of Jerusalem with Prof. Guy Katz, where he investigated the integration of neural network verification into learning-based control systems. Davide’s recent work also explores generative world models and causal reasoning to enable autonomous agents to predict long-term outcomes and safely adapt to new situations. His research has been published at leading venues such as AAAI, IJCAI, ICLR, IROS, and RLC, where he was recently recognized with an Outstanding Paper Award for his work on autonomous underwater navigation. |

August 7 DBH 4011 1 pm |

The fusion of artificial intelligence and medicine is driving a healthcare transformation, enabling personalized treatment tailored to each patient’s unique needs. By leveraging advances in machine learning, we can transform challenging and diverse patient data into actionable insights that have the potential to reshape clinical practice. In this presentation, I will cover both the theoretical foundations and practical applications of machine learning in healthcare. I will highlight innovative approaches designed to enhance diagnostics, improve patient care, and make medical decision-making more accessible and reliable. I will touch on five fundamental, interconnected areas: multimodal data integration, unsupervised structure detection, longitudinal data analysis, transparent model development, and development of decision support tools. Through real-world medical examples, I aim to illuminate the collaborative future of machine learning and healthcare, where cutting-edge computational techniques synergize with medical expertise to pave the way for decision-support systems truly integrated into medical practice.

Bio: Julia Vogt is an assistant professor in Computer Science at ETH Zurich, where she leads the Medical Data Science Group. The focus of her research is on linking computer science with medicine, with the ultimate aim of personalized patient treatment. She studied mathematics in both Konstanz and Sydney and earned her Ph.D. in computer science at the University of Basel. She was a postdoctoral research fellow at the Memorial Sloan-Kettering Cancer Center in NYC and with the Bioinformatics and Information Mining group at the University of Konstanz. In 2018, she joined the University of Basel as an assistant professor. In May 2019, she and her lab moved to Zurich where she joined the Computer Science Department of ETH Zurich. |

August 28 DBH 4011 1 pm |

Daniel Neider, Professor of Verification and Formal Guarantees of Machine Learning, Department of Computer Science, TU Dortmund

Artificial Intelligence has become ubiquitous in modern life. This “Cambrian explosion” of intelligent systems has been made possible by extraordinary advances in machine learning, especially in training deep neural networks and their ingenious architectures. However, like traditional hardware and software, neural networks often have defects, which are notoriously difficult to detect and correct. Consequently, deploying them in safety-critical settings remains a substantial challenge. Motivated by the success of formal methods in establishing the reliability of safety-critical hardware and software, numerous formal verification techniques for deep neural networks have emerged recently. These techniques aim to ensure the safety of AI systems by detecting potential bugs, including those that may arise from unseen data not present in the training or test datasets, and proving the absence of critical errors. As a guide through the vibrant and rapidly evolving field of neural network verification, this talk will give an overview of the fundamentals and core concepts of the field, discuss prototypical examples of various existing verification approaches, and showcase how generative AI can improve verification results.

Bio: Daniel Neider is Professor of Verification and Formal Guarantees of Machine Learning at the Technical University of Dortmund and an affiliated researcher at the “Trustworthy Data Science and Security” research center within the University Alliance Ruhr. His research focuses on developing formal methods to ensure the reliability of artificial intelligence and machine learning models. After earning his doctorate from RWTH Aachen University in 2014, he held postdoctoral positions at the University of Illinois at Urbana-Champaign and the University of California, Los Angeles, contributing to the NSF-funded “ExCAPE – Expeditions in Computer-Augmented Program Engineering” project. He later led the “Logic and Learning” research group at the Max Planck Institute for Software Systems from 2017 to 2022 while teaching at TU Kaiserslautern. Before joining TU Dortmund in November 2022, he served as Professor of Security and Explainability of Learning Systems at Carl von Ossietzky University Oldenburg. |