Oct. 10 DBH 4011 1 pm |

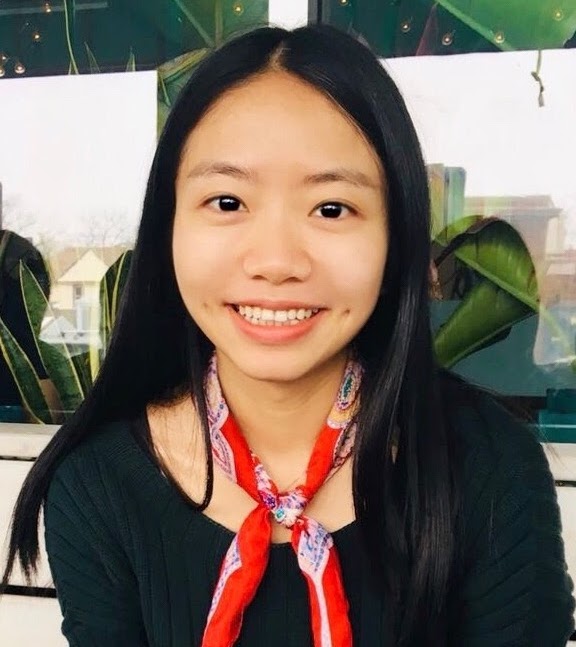

With the burgeoning use of machine learning models in an assortment of applications, there is a need to rapidly and reliably deploy models in a variety of environments. These trustworthy machine learning models must satisfy certain criteria, namely the ability to: (i) adapt and generalize to previously unseen worlds although trained on data that only represent a subset of the world, (ii) allow for non-iid data, (iii) be resilient to (adversarial) perturbations, and (iv) conform to social norms and make ethical decisions. In this talk, towards trustworthy and generally applicable intelligent systems, I will cover some reinforcement learning algorithms that achieve fast adaptation by guaranteed knowledge transfer, principled methods that measure the vulnerability and improve the robustness of reinforcement learning agents, and ethical models that make fair decisions under distribution shifts.

Bio: Furong Huang is an Assistant Professor in the Department of Computer Science at the University of Maryland. She works on statistical and trustworthy machine learning, reinforcement learning, graph neural networks, deep learning theory, and federated learning, with a specialization in domain adaptation, algorithmic robustness, and fairness. Furong is a recipient of the NSF CRII Award, the MLconf Industry Impact Research Award, the Adobe Faculty Research Award, and three JP Morgan Faculty Research Awards. She is a Finalist of AI in Research – AI researcher of the year for Women in AI Awards North America 2022. She received her Ph.D. in electrical engineering and computer science from UC Irvine in 2016, after which she completed postdoctoral positions at Microsoft Research NYC. |

Oct. 17 DBH 4011 1 pm |

Bodhi Majumder

PhD Student, Department of Computer Science and Engineering, University of California, San Diego

The use of artificial intelligence in knowledge-seeking applications (e.g., for recommendations and explanations) has shown remarkable effectiveness. However, the increasing demand for more interaction, accessibility, and user-friendliness in these systems requires the underlying components (dialog models, LLMs) to be adequately grounded in up-to-date real-world context. In reality, even powerful generative models often lack commonsense, explanations, and subjectivity, capabilities that are a long-standing goal of artificial general intelligence. In this talk, I will partly address these problems in three parts and hint at future possibilities and social impacts. Mainly, I will discuss: 1) methods to effectively inject up-to-date knowledge into an existing dialog model without any additional training, 2) the role of background knowledge in generating faithful natural language explanations, and 3) a conversational framework to address subjectivity, balancing task performance and bias mitigation for fair, interpretable predictions.

Bio: Bodhisattwa Prasad Majumder is a final-year PhD student at CSE, UC San Diego, advised by Prof. Julian McAuley. His research goal is to build interactive machines capable of producing knowledge-grounded explanations. He previously interned at the Allen Institute for AI, Google AI, Microsoft Research, and FAIR (Meta AI), and collaborated with the University of Oxford, the University of British Columbia, and the Alan Turing Institute. He is a recipient of the UCSD CSE Doctoral Award for Research (2022), Adobe Research Fellowship (2022), UCSD Friends Fellowship (2022), and Qualcomm Innovation Fellowship (2020). In 2019, Bodhi led UCSD in the finals of the Amazon Alexa Prize. He also co-authored a best-selling NLP book with O’Reilly Media that is being adopted in universities internationally. Website: http://www.majumderb.com/. |

Oct. 24 DBH 4011 1 pm |

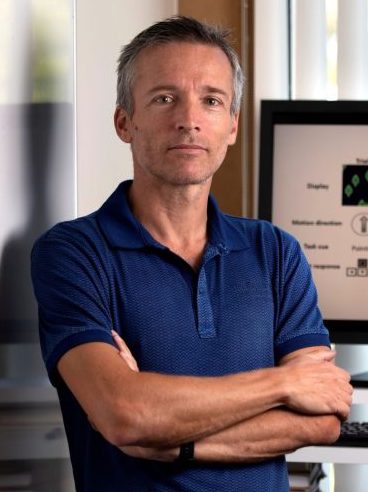

Artificial intelligence (AI) and machine learning models are being increasingly deployed in real-world applications. In many of these applications, there is strong motivation to develop hybrid systems in which humans and AI algorithms can work together, leveraging their complementary strengths and weaknesses. In the first part of the presentation, I will discuss results from a Bayesian framework where we statistically combine the predictions from humans and machines while taking into account the unique ways human and algorithmic confidence is expressed. The framework allows us to investigate the factors that influence complementarity, where a hybrid combination of human and machine predictions leads to better performance than combinations of human or machine predictions alone. In the second part of the presentation, I will discuss some recent work on AI-assisted decision making where individuals are presented with recommended predictions from classifiers. Using a cognitive modeling approach, we can estimate the AI reliance policy used by individual participants. The results show that AI advice is more readily adopted when the individual is in a low-confidence state, when the AI gives its advice with high confidence, and when the AI is generally more accurate. In the final part of the presentation, I will discuss the question of “machine theory of mind” and “theory of machine”: how humans and machines can efficiently form mental models of each other. I will show some recent results on theory-of-mind experiments where the goal is for individuals and machine algorithms to predict the performance of other individuals in image classification tasks. The results show performance gaps where human individuals outperform algorithms in mindreading tasks. I will discuss several research directions designed to close the gap.

Bio: Mark Steyvers is a Professor of Cognitive Science at UC Irvine and Chancellor’s Fellow. He has a joint appointment with the Computer Science department and is affiliated with the Center for Machine Learning and Intelligent Systems. His publications span work in cognitive science as well as machine learning, and his work has been funded by NSF, NIH, IARPA, NAVY, and AFOSR. He received his PhD from Indiana University and was a Postdoctoral Fellow at Stanford University. He is currently serving as Associate Editor of Computational Brain and Behavior and Consulting Editor for Psychological Review and has previously served as the President of the Society of Mathematical Psychology, Associate Editor for Psychonomic Bulletin & Review and the Journal of Mathematical Psychology. In addition, he has served as a consultant for a variety of companies such as eBay, Yahoo, Netflix, Merriam Webster, Rubicon and Gimbal on machine learning problems. Dr. Steyvers received New Investigator Awards from the American Psychological Association as well as the Society of Experimental Psychologists. He also received an award from the Future of Privacy Forum and Alfred P. Sloan Foundation for his collaborative work with Lumosity. |

Oct. 31 DBH 4011 1 pm |

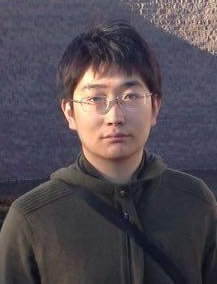

In reasoning about sequential events it is natural to pose probabilistic queries such as “when will event A occur next” or “what is the probability of A occurring before B”, with applications in areas such as user modeling, medicine, and finance. However, with machine learning shifting towards neural autoregressive models such as RNNs and transformers, probabilistic querying has been largely restricted to simple cases such as next-event prediction. This is in part because querying about the future involves marginalization over large path spaces, which is not straightforward to do efficiently in such models. In this talk, we will describe a novel representation of querying for these discrete sequential models, as well as discuss various approximation and search techniques that can be utilized to help estimate these probabilistic queries. Lastly, we will briefly touch on ongoing work that has extended these techniques to sequential models for continuous-time events.

Bio: Alex Boyd is a Statistics PhD candidate at UC Irvine, co-advised by Padhraic Smyth and Stephan Mandt. His work focuses on improving probabilistic methods, primarily for deep sequential models. He was selected in 2020 as a National Science Foundation Graduate Fellow. |

Nov. 7 DBH 4011 1 pm |

Yanning Shen

Assistant Professor of Electrical Engineering and Computer Science, University of California, Irvine

We live in an era of data deluge, where pervasive media collect massive amounts of data, often in a streaming fashion. Learning from these dynamic and large volumes of data is hence expected to bring significant science and engineering advances along with consequent improvements in quality of life. However, with the blessings come big challenges. The sheer volume of data makes it impossible to run analytics in batch form. Large-scale datasets are noisy, incomplete, and prone to outliers. As many sources continuously generate data in real-time, it is often impossible to store all of it. Thus, analytics must often be performed in real-time, without a chance to revisit past entries. In response to these challenges, this talk will first introduce an online scalable function approximation scheme that is suitable for various machine learning tasks. The novel approach adaptively learns and tracks the sought nonlinear function ‘on the fly’ with quantifiable performance guarantees, even in adversarial environments with unknown dynamics. Building on this robust and scalable function approximation framework, a scalable online learning approach with graph feedback will be outlined next for online learning with possibly related models. The effectiveness of the novel algorithms will be showcased on several real-world datasets.

Bio: Yanning Shen is an assistant professor with the EECS department at the University of California, Irvine. She received her Ph.D. degree from the University of Minnesota (UMN) in 2019. She was a finalist for the Best Student Paper Award at the 2017 IEEE International Workshop on Computational Advances in Multi-Sensor Adaptive Processing, and the 2017 Asilomar Conference on Signals, Systems, and Computers. She was selected as a Rising Star in EECS by Stanford University in 2017. She received the Microsoft Academic Grant Award for AI Research in 2021, the Google Research Scholar Award in 2022, and the Hellman Fellowship in 2022. Her research interests span the areas of machine learning, network science, data science, and signal processing. |

Nov. 14 DBH 4011 1 pm |

Information extraction (IE) is the process of automatically inducing structures of concepts and relations described in natural language text. It is a fundamental task for assessing a machine’s ability to understand natural language, as well as an essential step in acquiring the structured knowledge representations that are integral to any knowledge-driven AI system. Despite its importance, obtaining direct supervision for IE tasks is very difficult, as it requires expert annotators to read through long documents and identify complex structures. Therefore, a robust and accountable IE model has to be achievable with minimal and imperfect supervision. Toward this goal, this talk covers recent advances in machine learning and inference technologies that (i) grant robustness against noise and perturbation, (ii) prevent systematic errors caused by spurious correlations, and (iii) provide indirect supervision for label-efficient and logically consistent IE.

Bio: Muhao Chen is an Assistant Research Professor of Computer Science at USC, and the director of the USC Language Understanding and Knowledge Acquisition (LUKA) Lab. His research focuses on robust and minimally supervised machine learning for natural language understanding, structured data processing, and knowledge acquisition from unstructured data. His work has been recognized with an NSF CRII Award, faculty research awards from Cisco and Amazon, an ACM SIGBio Best Student Paper Award, and a best paper nomination at CoNLL. Dr. Chen obtained his Ph.D. degree from the UCLA Department of Computer Science in 2019, and was a postdoctoral researcher at UPenn prior to joining USC. |

Nov. 21 DBH 4011 1 pm |

Peter Orbanz

Professor of Machine Learning, Gatsby Computational Neuroscience Unit, University College London

Consider a large random structure (a random graph, a stochastic process on the line, a random field on the grid) and a function that depends only on a small part of the structure. Now use a family of transformations to ‘move’ the domain of the function over the structure, collect each function value, and average. Under suitable conditions, the law of large numbers generalizes to such averages; that is one of the deep insights of modern ergodic theory. My own recent work with Morgane Austern (Harvard) shows that central limit theorems and other higher-order properties also hold. Loosely speaking, if the i.i.d. assumption of classical statistics is substituted by suitable properties formulated in terms of groups, the fundamental theorems of inference still hold.

Bio: Peter Orbanz is a Professor of Machine Learning in the Gatsby Computational Neuroscience Unit at University College London. He studies large systems of dependent variables in machine learning and inference problems. That involves symmetry and group invariance properties, such as exchangeability and stationarity, random graphs and random structures, hierarchies of latent variables, and the intersection of ergodic theory and statistical physics with statistics and machine learning. In the past, Peter was a PhD student of Joachim M. Buhmann at ETH Zurich, a postdoc with Zoubin Ghahramani at the University of Cambridge, and Assistant and Associate Professor in the Department of Statistics at Columbia University. |

Nov. 28 |

No Seminar (NeurIPS Conference)

|

Center for Machine Learning and Intelligent Systems

Bren School of Information and Computer Science

University of California, Irvine