Oct. 10 DBH 4011 1 pm |

With the burgeoning use of machine learning models in an assortment of applications, there is a need to rapidly and reliably deploy models in a variety of environments. These trustworthy machine learning models must satisfy certain criteria, namely the ability to: (i) adapt and generalize to previously unseen worlds although trained on data that only represent a subset of the world, (ii) allow for non-iid data, (iii) be resilient to (adversarial) perturbations, and (iv) conform to social norms and make ethical decisions. In this talk, towards trustworthy and generally applicable intelligent systems, I will cover some reinforcement learning algorithms that achieve fast adaptation by guaranteed knowledge transfer, principled methods that measure the vulnerability and improve the robustness of reinforcement learning agents, and ethical models that make fair decisions under distribution shifts.

Bio: Furong Huang is an Assistant Professor in the Department of Computer Science at the University of Maryland. She works on statistical and trustworthy machine learning, reinforcement learning, graph neural networks, deep learning theory, and federated learning, with specializations in domain adaptation, algorithmic robustness, and fairness. Furong is a recipient of the NSF CRII Award, the MLconf Industry Impact Research Award, the Adobe Faculty Research Award, and three JP Morgan Faculty Research Awards. She is a finalist for AI in Research – AI Researcher of the Year at the Women in AI Awards North America 2022. She received her Ph.D. in electrical engineering and computer science from UC Irvine in 2016, after which she completed postdoctoral positions at Microsoft Research NYC. |

Oct. 17 DBH 4011 1 pm |

Bodhi Majumder, PhD Student, Department of Computer Science and Engineering, University of California, San Diego

The use of artificial intelligence in knowledge-seeking applications (e.g., for recommendations and explanations) has shown remarkable effectiveness. However, the increasing demand for more interactions, accessibility, and user-friendliness in these systems requires the underlying components (dialog models, LLMs) to be adequately grounded in up-to-date real-world context. In reality, even powerful generative models often lack commonsense, explanations, and subjectivity, capabilities that are a long-standing goal of artificial general intelligence. In this talk, I will partly address these problems in three parts and hint at future possibilities and social impacts. Mainly, I will discuss: 1) methods to effectively inject up-to-date knowledge into an existing dialog model without any additional training, 2) the role of background knowledge in generating faithful natural language explanations, and 3) a conversational framework to address subjectivity, balancing task performance and bias mitigation for fair, interpretable predictions.

Bio: Bodhisattwa Prasad Majumder is a final-year PhD student at CSE, UC San Diego, advised by Prof. Julian McAuley. His research goal is to build interactive machines capable of producing knowledge-grounded explanations. He previously interned at the Allen Institute for AI, Google AI, Microsoft Research, and FAIR (Meta AI), and collaborated with the University of Oxford, the University of British Columbia, and the Alan Turing Institute. He is a recipient of the UCSD CSE Doctoral Award for Research (2022), the Adobe Research Fellowship (2022), the UCSD Friends Fellowship (2022), and the Qualcomm Innovation Fellowship (2020). In 2019, Bodhi led UCSD in the finals of the Amazon Alexa Prize. He also co-authored a best-selling NLP book with O’Reilly Media that is being adopted in universities internationally. Website: http://www.majumderb.com/. |

Oct. 24 DBH 4011 1 pm |

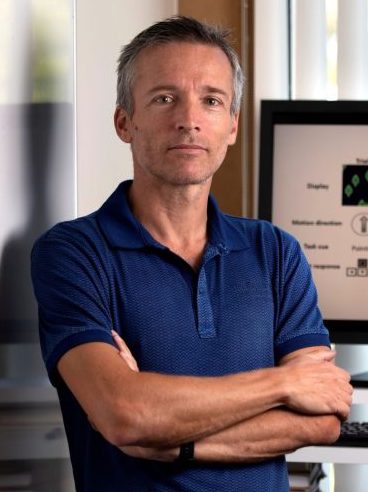

Artificial intelligence (AI) and machine learning models are being increasingly deployed in real-world applications. In many of these applications, there is strong motivation to develop hybrid systems in which humans and AI algorithms can work together, leveraging their complementary strengths and weaknesses. In the first part of the presentation, I will discuss results from a Bayesian framework where we statistically combine the predictions from humans and machines while taking into account the unique ways human and algorithmic confidence is expressed. The framework allows us to investigate the factors that influence complementarity, where a hybrid combination of human and machine predictions leads to better performance than combinations of human or machine predictions alone. In the second part of the presentation, I will discuss some recent work on AI-assisted decision making where individuals are presented with recommended predictions from classifiers. Using a cognitive modeling approach, we can estimate the AI reliance policy used by individual participants. The results show that AI advice is more readily adopted if the individual is in a low confidence state, receives high-confidence advice from the AI and when the AI is generally more accurate. In the final part of the presentation, I will discuss the question of “machine theory of mind” and “theory of machine”, how humans and machines can efficiently form mental models of each other. I will show some recent results on theory-of-mind experiments where the goal is for individuals and machine algorithms to predict the performance of other individuals in image classification tasks. The results show performance gaps where human individuals outperform algorithms in mindreading tasks. I will discuss several research directions designed to close the gap.

Bio: Mark Steyvers is a Professor of Cognitive Science at UC Irvine and Chancellor’s Fellow. He has a joint appointment with the Computer Science department and is affiliated with the Center for Machine Learning and Intelligent Systems. His publications span cognitive science as well as machine learning, and his work has been funded by NSF, NIH, IARPA, NAVY, and AFOSR. He received his PhD from Indiana University and was a Postdoctoral Fellow at Stanford University. He is currently serving as Associate Editor of Computational Brain and Behavior and Consulting Editor for Psychological Review, and has previously served as the President of the Society of Mathematical Psychology and as Associate Editor for Psychonomic Bulletin & Review and the Journal of Mathematical Psychology. In addition, he has served as a consultant on machine learning problems for a variety of companies such as eBay, Yahoo, Netflix, Merriam Webster, Rubicon, and Gimbal. Dr. Steyvers received New Investigator Awards from the American Psychological Association as well as the Society of Experimental Psychologists. He also received an award from the Future of Privacy Forum and Alfred P. Sloan Foundation for his collaborative work with Lumosity. |

Oct. 31 DBH 4011 1 pm |

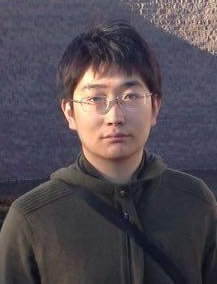

In reasoning about sequential events it is natural to pose probabilistic queries such as “when will event A occur next” or “what is the probability of A occurring before B”, with applications in areas such as user modeling, medicine, and finance. However, with machine learning shifting towards neural autoregressive models such as RNNs and transformers, probabilistic querying has been largely restricted to simple cases such as next-event prediction. This is in part due to the fact that future querying involves marginalization over large path spaces, which is not straightforward to do efficiently in such models. In this talk, we will describe a novel representation of querying for these discrete sequential models, as well as discuss various approximation and search techniques that can be utilized to help estimate these probabilistic queries. Lastly, we will briefly touch on ongoing work that has extended these techniques into sequential models for continuous time events.

Bio: Alex Boyd is a Statistics PhD candidate at UC Irvine, co-advised by Padhraic Smyth and Stephan Mandt. His work focuses on improving probabilistic methods, primarily for deep sequential models. He was selected in 2020 as a National Science Foundation Graduate Fellow. |

Nov. 7 DBH 4011 1 pm |

Yanning Shen, Assistant Professor of Electrical Engineering and Computer Science, University of California, Irvine

We live in an era of data deluge, where pervasive media collect massive amounts of data, often in a streaming fashion. Learning from these dynamic and large volumes of data is hence expected to bring significant science and engineering advances along with consequent improvements in quality of life. However, with the blessings come big challenges. The sheer volume of data makes it impossible to run analytics in batch form. Large-scale datasets are noisy, incomplete, and prone to outliers. As many sources continuously generate data in real-time, it is often impossible to store all of it. Thus, analytics must often be performed in real-time, without a chance to revisit past entries. In response to these challenges, this talk will first introduce an online scalable function approximation scheme that is suitable for various machine learning tasks. The novel approach adaptively learns and tracks the sought nonlinear function ‘on the fly’ with quantifiable performance guarantees, even in adversarial environments with unknown dynamics. Building on this robust and scalable function approximation framework, a scalable online learning approach with graph feedback will be outlined next for online learning with possibly related models. The effectiveness of the novel algorithms will be showcased in several real-world datasets.

Bio: Yanning Shen is an assistant professor with the EECS department at the University of California, Irvine. She received her Ph.D. degree from the University of Minnesota (UMN) in 2019. She was a finalist for the Best Student Paper Award at the 2017 IEEE International Workshop on Computational Advances in Multi-Sensor Adaptive Processing, and the 2017 Asilomar Conference on Signals, Systems, and Computers. She was selected as a Rising Star in EECS by Stanford University in 2017. She received the Microsoft Academic Grant Award for AI Research in 2021, the Google Research Scholar Award in 2022, and the Hellman Fellowship in 2022. Her research interests span the areas of machine learning, network science, data science, and signal processing. |

Nov. 14 DBH 4011 1 pm |

Information extraction (IE) is the process of automatically inducing structures of concepts and relations described in natural language text. It is a fundamental task for assessing a machine’s ability to understand natural language, as well as an essential step for acquiring the structural knowledge representation that is integral to any knowledge-driven AI system. Despite its importance, obtaining direct supervision for IE tasks is always very difficult, as it requires expert annotators to read through long documents and identify complex structures. Therefore, a robust and accountable IE model has to be achievable with minimal and imperfect supervision. Towards this mission, this talk covers recent advances in machine learning and inference technologies that (i) grant robustness against noise and perturbation, (ii) prevent systematic errors caused by spurious correlations, and (iii) provide indirect supervision for label-efficient and logically consistent IE.

Bio: Muhao Chen is an Assistant Research Professor of Computer Science at USC, and the director of the USC Language Understanding and Knowledge Acquisition (LUKA) Lab. His research focuses on robust and minimally supervised machine learning for natural language understanding, structured data processing, and knowledge acquisition from unstructured data. His work has been recognized with an NSF CRII Award, faculty research awards from Cisco and Amazon, an ACM SIGBio Best Student Paper Award and a best paper nomination at CoNLL. Dr. Chen obtained his Ph.D. degree from UCLA Department of Computer Science in 2019, and was a postdoctoral researcher at UPenn prior to joining USC. |

Nov. 21 DBH 4011 1 pm |

Peter Orbanz, Professor of Machine Learning, Gatsby Computational Neuroscience Unit, University College London

Consider a large random structure (a random graph, a stochastic process on the line, or a random field on the grid) and a function that depends only on a small part of the structure. Now use a family of transformations to ‘move’ the domain of the function over the structure, collect each function value, and average. Under suitable conditions, the law of large numbers generalizes to such averages; that is one of the deep insights of modern ergodic theory. My own recent work with Morgane Austern (Harvard) shows that central limit theorems and other higher-order properties also hold. Loosely speaking, if the i.i.d. assumption of classical statistics is substituted by suitable properties formulated in terms of groups, the fundamental theorems of inference still hold.

Bio: Peter Orbanz is a Professor of Machine Learning in the Gatsby Computational Neuroscience Unit at University College London. He studies large systems of dependent variables in machine learning and inference problems. That involves symmetry and group invariance properties, such as exchangeability and stationarity, random graphs and random structures, hierarchies of latent variables, and the intersection of ergodic theory and statistical physics with statistics and machine learning. In the past, Peter was a PhD student of Joachim M. Buhmann at ETH Zurich, a postdoc with Zoubin Ghahramani at the University of Cambridge, and Assistant and Associate Professor in the Department of Statistics at Columbia University. |

Nov. 28 |

No Seminar (NeurIPS Conference)

|

By: Erik Sudderth

Jyothi Named a Rising Star by N2Women

Congratulations to Sangeetha Abdu Jyothi for being named a 2022 Rising Star in Computer Networking and Communications by N2Women. Prof. Jyothi explores innovative applications of machine learning to systems and networking problems, including award-winning recent work characterizing the resilience of the internet to solar superstorms.

CML Researchers win NAACL Paper Award

Congratulations to CML PhD student Robert Logan and his advisor Prof. Sameer Singh, who received a Best New Task Paper Award at the 2022 Annual Conference of the North American Chapter of the Association for Computational Linguistics (NAACL). Their method, FRUIT: Faithfully Reflecting Updated Information in Text, uses language models to automatically update articles (like those on Wikipedia) when new evidence is obtained. This work is motivated not only by a desire to assist the volunteers who maintain Wikipedia, but also by the ways it pushes the boundaries of the NLP field.

Spring 2022

Live Stream for all Spring 2022 CML Seminars

Maurizio Filippone, Associate Professor, EURECOM, and Ba-Hien Tran, PhD Student, EURECOM

YouTube Stream: https://youtu.be/oZAuh686ipw

The Bayesian treatment of neural networks dictates that a prior distribution is specified over their weight and bias parameters. This poses a challenge because modern neural networks are characterized by a huge number of parameters and non-linearities. The choice of these priors has an unpredictable effect on the distribution of the functional output, which could represent a hugely limiting aspect of Bayesian deep learning models. In contrast, Gaussian processes offer a rigorous non-parametric framework to define prior distributions over the space of functions. In this talk, we aim to introduce a novel and robust framework to impose such functional priors on modern neural networks for supervised learning tasks by minimizing the Wasserstein distance between samples of stochastic processes. In addition, we extend this framework to carry out model selection for Bayesian autoencoders for unsupervised learning tasks. We provide extensive experimental evidence that coupling these priors with scalable Markov chain Monte Carlo sampling offers systematically large performance improvements over alternative choices of priors and state-of-the-art approximate Bayesian deep learning approaches.

Bio: Maurizio Filippone received a Master’s degree in Physics and a Ph.D. in Computer Science from the University of Genova, Italy, in 2004 and 2008, respectively. In 2007, he was a Research Scholar with George Mason University, Fairfax, VA. From 2008 to 2011, he was a Research Associate with the University of Sheffield, U.K. (2008-2009), with the University of Glasgow, U.K. (2010), and with University College London, U.K. (2011). From 2011 to 2015 he was a Lecturer at the University of Glasgow, U.K., and he is currently AXA Chair of Computational Statistics and Associate Professor at EURECOM, Sophia Antipolis, France. His current research interests include the development of tractable and scalable Bayesian inference techniques for Gaussian processes and Deep/Conv Nets with applications in life and environmental sciences.

Bio: Ba-Hien Tran is currently a PhD student within the Data Science department of EURECOM, under the supervision of Professor Maurizio Filippone. His research focuses on Accelerating Inference for Deep Probabilistic Modeling. In 2016, he received a Bachelor of Science degree with honors in Computer Science from Vietnam National University, HCMC. His thesis investigated Deep Learning approaches for data-driven image captioning. In 2020, he received a Master of Science in Engineering degree in Data Science from Télécom Paris. His thesis focused on Bayesian Inference for Deep Neural Networks. |

|

Ties van Rozendaal, Senior Machine Learning Researcher, Qualcomm AI Research

YouTube Stream: https://youtu.be/LQu-kwpfFg4

Neural data compression has been shown to outperform classical methods in terms of rate-distortion performance, with results still improving rapidly. These models are fitted to a training dataset and cannot be expected to optimally compress test data in general due to limitations on model capacity, distribution shifts, and imperfect optimization. If the test-time data distribution is known and has relatively low entropy, the model can easily be finetuned or adapted to this distribution. Instance-adaptive methods take this approach to the extreme, adapting the model to a single test instance, and signaling the updated model along in the bitstream. In this talk, we will show the potential of different types of instance-adaptive methods and discuss the tradeoffs that these methods pose.

Bio: Ties is a senior machine learning researcher at Qualcomm AI Research. He obtained his master’s degree at the University of Amsterdam with a thesis on personalizing automatic speech recognition systems using unsupervised methods. At Qualcomm AI Research he has been working on neural compression, with a focus on using generative models to compress image and video data. His research includes work on semantic compression and constrained optimization as well as instance-adaptive and neural-implicit compression. |

|

Robin Jia, Assistant Professor of Computer Science, University of Southern California

YouTube Stream: https://youtu.be/ALqqlgbzAB0

Natural language processing (NLP) models have achieved impressive accuracies on in-distribution benchmarks, but they are unreliable in out-of-distribution (OOD) settings. In this talk, I will give an exclusive preview of my group’s ongoing work on evaluating and improving model performance in OOD settings. First, I will propose likelihood splits, a general-purpose way to create challenging non-i.i.d. benchmarks by measuring generalization to the tail of the data distribution, as identified by a language model. Second, I will describe the advantages of neurosymbolic approaches over end-to-end pretrained models for OOD generalization in visual question answering; these results highlight the importance of measuring OOD generalization when comparing modeling approaches. Finally, I will show how synthesized examples can improve open-set recognition, the task of abstaining on OOD examples that come from classes never seen at training time.

Bio: Robin Jia is an Assistant Professor of Computer Science at the University of Southern California. He received his Ph.D. in Computer Science from Stanford University, where he was advised by Percy Liang. He has also spent time as a visiting researcher at Facebook AI Research, working with Luke Zettlemoyer and Douwe Kiela. He is interested broadly in natural language processing and machine learning, with a particular focus on building NLP systems that are robust to distribution shift. Robin’s work has received best paper awards at ACL and EMNLP. |

|

May 23 |

No Seminar

|

May 30 |

No Seminar (Memorial Day Holiday)

|

Bobak Pezeshki, PhD Student, Department of Computer Science, University of California, Irvine

YouTube Stream: https://youtu.be/Yl_aCTieVqc

Computational protein design (CPD) is the task of creating new proteins to fulfill a desired function. In this talk, I will share work recently accepted at UAI 2022 based on a new formulation of CPD as a graphical model designed for optimizing subunit binding affinity. These new methods showed promising results when compared with the state-of-the-art algorithm BBK*, which is part of a long-developed software package dedicated to CPD. In the talk, I will first describe CPD in general and, specifically, for optimizing a quantity called K* (which approximates binding affinity). I will relate this to the well-known task of MMAP, for which many powerful algorithms have been recently developed and by which our methods are inspired. Next I will give a preview of the promising results of our new framework. I will then go on to describe the framework, presenting the formulation of the problem as a graphical model for K* optimization and introducing a weighted mini-bucket heuristic for bounding K* and guiding search. Finally, I will share our algorithm AOBB-K* and modifications that can enhance it, describing some of the empirical benefits and limitations of our scheme. To conclude, I will outline some future directions for advancing the use of this framework.

Bio: Bobak Pezeshki is a fifth-year PhD student of Computer Science at the University of California, Irvine, advised by Professor Rina Dechter. His research focuses on automated reasoning over graphical models, with an emphasis on Abstraction Sampling and on applying automated reasoning over graphical models to computational protein design. He completed his undergraduate studies at UC Berkeley majoring in Molecular and Cell Biology (with an emphasis in Biochemistry) and Integrative Biology. Before pursuing his PhD at UCI, he was involved in protein biochemistry research at the Stroud Lab, UCSF, and at Novartis Vaccines and Diagnostics. |

CML faculty elected as AAAS Fellows

Two faculty affiliated with the UCI Center for Machine Learning and Intelligent Systems have been elected as 2021 AAAS Fellows, joining 190 other AAAS Fellows at UC Irvine. Rina Dechter, Distinguished Professor of Computer Science and Associate Dean for Research in the Donald Bren School of Information & Computer Sciences, was elected for contributions to computational aspects of automated reasoning and knowledge representation, including search, constraint processing, and probabilistic reasoning, and for service to the computing community. Padhraic Smyth, Chancellor’s Professor of Computer Science and Associate Director of the UCI Center for Machine Learning, was elected for distinguished contributions to the field of machine learning, particularly the development of statistical foundations and methodologies. Congratulations to them both!

Winter 2022

Live Stream for all Winter 2022 CML Seminars

January 3 |

No Seminar

|

Roy Fox, Assistant Professor, Department of Computer Science, University of California, Irvine

YouTube Stream: https://youtu.be/ImvsK5CFp0w

Ensemble methods for reinforcement learning have gained attention in recent years, due to their ability to represent model uncertainty and use it to guide exploration and to reduce value estimation bias. We present MeanQ, a very simple ensemble method with improved performance, and show how it reduces estimation variance enough to operate without a stabilizing target network. Curiously, MeanQ is theoretically *almost* equivalent to a non-ensemble state-of-the-art method that it significantly outperforms, raising questions about the interaction between uncertainty estimation, representation, and resampling.

In adversarial environments, where a second agent attempts to minimize the first’s rewards, double-oracle (DO) methods grow a population of policies for both agents by iteratively adding the best response to the current population. DO algorithms are guaranteed to converge when they exhaust all policies, but are only effective when they find a small population sufficient to induce a good agent. We present XDO, a DO algorithm that exploits the game’s sequential structure to exponentially reduce the worst-case population size. Curiously, the small population size that XDO needs to find good agents more than compensates for the increased difficulty of iterating with a given population size.

Bio: Roy Fox is an Assistant Professor and director of the Intelligent Dynamics Lab at the Department of Computer Science at UCI. He was previously a postdoc in UC Berkeley’s BAIR, RISELab, and AUTOLAB, where he developed algorithms and systems that interact with humans to learn structured control policies for robotics and program synthesis. His research interests include theory and applications of reinforcement learning, algorithmic game theory, information theory, and robotics. His current research focuses on structure, exploration, and optimization in deep reinforcement learning and imitation learning of virtual and physical agents and multi-agent systems. |

|

January 17 |

No Seminar (Martin Luther King, Jr. Day)

|

Ransalu Senanayake, Postdoctoral Scholar, Department of Computer Science, Stanford University

YouTube Stream: https://youtu.be/3yR8BqBElXw

Autonomous agents such as self-driving cars have already gained the capability to perform individual tasks such as object detection and lane following, especially in simple, static environments. While advancing robots towards full autonomy, it is important to minimize deleterious effects on humans and infrastructure to ensure the trustworthiness of such systems. However, for robots to safely operate in the real world, it is vital for them to quantify the multimodal aleatoric and epistemic uncertainty around them and use that uncertainty for decision-making. In this talk, I will discuss how we can leverage tools from approximate Bayesian inference, kernel methods, and deep neural networks to develop interpretable autonomous systems for high-stakes applications.

Bio: Ransalu Senanayake is a postdoctoral scholar in the Statistical Machine Learning Group at the Department of Computer Science, Stanford University. He focuses on making downstream applications of machine learning trustworthy by quantifying uncertainty and explaining the decisions of such systems. Currently, he works with Prof. Emily Fox and Prof. Carlos Guestrin. He also worked on decision-making under uncertainty with Prof. Mykel Kochenderfer. Prior to joining Stanford, Ransalu obtained a PhD in Computer Science from the University of Sydney, Australia, and an MPhil in Industrial Engineering and Decision Analytics from the Hong Kong University of Science and Technology, Hong Kong. |

|

Dylan Slack, PhD Student, Department of Computer Science, University of California, Irvine

YouTube Stream: https://youtu.be/71RJvjPhk3U

For domain experts to adopt machine learning (ML) models in high-stakes settings such as health care and law, they must understand and trust model predictions. As a result, researchers have proposed numerous ways to explain the predictions of complex ML models. However, these approaches suffer from several critical drawbacks, such as vulnerability to adversarial attacks, instability, inconsistency, and lack of guidance about accuracy and correctness. For practitioners to safely use explanations in the real world, it is vital to properly characterize the limitations of current techniques and develop improved explainability methods. This talk will describe the shortcomings of explanations and introduce current research demonstrating how they are vulnerable to adversarial attacks. I will also discuss promising solutions and present recent work on explanations that leverage uncertainty estimates to overcome several critical explanation shortcomings.

Bio: Dylan Slack is a Ph.D. candidate at UC Irvine advised by Sameer Singh and Hima Lakkaraju and associated with UCI NLP, CREATE, and the HPI Research Center. His research focuses on developing techniques that help researchers and practitioners build more robust, reliable, and trustworthy machine learning models. In the past, he has held research internships at GoogleAI and Amazon AWS and was previously an undergraduate at Haverford College advised by Sorelle Friedler where he researched fairness in machine learning. |

|

Maja Rudolph, Senior Research Scientist, Bosch Center for AI

YouTube Stream: https://youtu.be/9fRw74WhRdE

Recurrent neural networks (RNNs) are a popular choice for modeling sequential data. Standard RNNs assume constant time-intervals between observations. However, in many datasets (e.g. medical records) observation times are irregular and can carry important information. To address this challenge, we propose continuous recurrent units (CRUs), a neural architecture that can naturally handle irregular intervals between observations. The CRU assumes a hidden state which evolves according to a linear stochastic differential equation and is integrated into an encoder-decoder framework. The recursive computations of the CRU can be derived using the continuous-discrete Kalman filter and are in closed form. The resulting recurrent architecture has temporal continuity between hidden states and a gating mechanism that can optimally integrate noisy observations. We derive an efficient parametrization scheme for the CRU that leads to a fast implementation (f-CRU). We empirically study the CRU on a number of challenging datasets and find that it can interpolate irregular time series better than methods based on neural ordinary differential equations.

Bio: Maja Rudolph is a Senior Research Scientist at the Bosch Center for AI where she works on machine learning research questions derived from engineering problems: for example, how to model driving behavior, how to forecast the operating conditions of a device, or how to find anomalies in the sensor data of an assembly line. In 2018, Maja completed her Ph.D. in Computer Science at Columbia University, advised by David Blei. She holds a MS in Electrical Engineering from Columbia University and a BS in Mathematics from MIT. |

|

Energy-based models (EBMs) are an appealing class of probabilistic models, which can be viewed as generative versions of discriminators, yet can be learned from unlabeled data. Despite a number of desirable properties, two challenges remain for training EBMs on high-dimensional datasets. First, learning EBMs by maximum likelihood requires Markov Chain Monte Carlo (MCMC) to generate samples from the model, which can be extremely expensive. Second, the energy potentials learned with non-convergent MCMC can be highly biased, making it difficult to evaluate the learned energy potentials or apply the learned models to downstream tasks. In this talk, I will present two algorithms to tackle the challenges of training EBMs. (1) Diffusion Recovery Likelihood, where we tractably learn and sample from a sequence of EBMs trained on increasingly noisy versions of a dataset. Each EBM is trained with recovery likelihood, which maximizes the conditional probability of the data at a certain noise level given their noisy versions at a higher noise level. (2) Flow Contrastive Estimation, where we jointly estimate an EBM and a flow-based model, in which the two models are iteratively updated based on a shared adversarial value function. We demonstrate that EBMs can be trained with a small budget of MCMC or completely without MCMC. The learned energy potentials are faithful and can be applied to likelihood evaluation and downstream tasks, such as feature learning and semi-supervised learning.

Bio: Ruiqi Gao is a research scientist at Google, Brain team. Her research interests are in statistical modeling and learning, with a focus on generative models and representation learning. She received her Ph.D. degree in statistics from the University of California, Los Angeles (UCLA) in 2021 advised by Song-Chun Zhu and Ying Nian Wu. Prior to that, she received her bachelor’s degree from Peking University. Her recent research themes include scalable training algorithms of deep generative models, variational inference, and representational models with implications in neuroscience. |

|

February 21 |

No Seminar (Presidents’ Day)

|

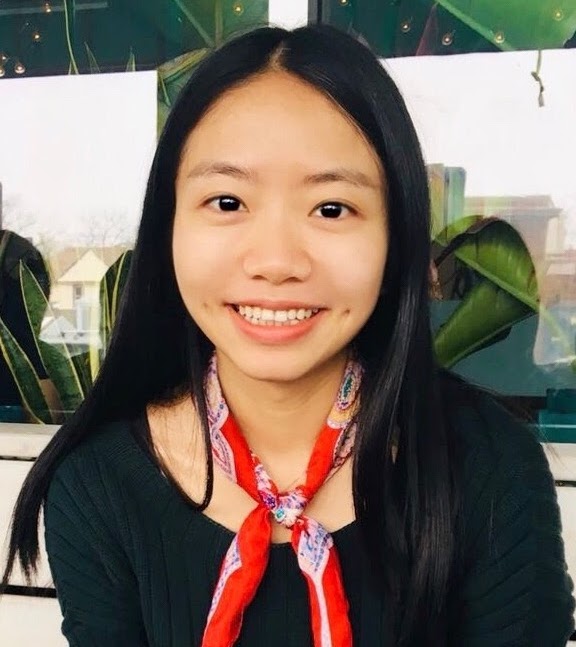

Large language models are commonly used in different paradigms of natural language processing and machine learning, and are known for their efficiency as well as their overall lack of interpretability. Their data-driven approach for emulating human language often results in human biases being encoded and even amplified, potentially leading to cyclic propagation of representational and allocational harm. We discuss in this talk some aspects of detecting, evaluating, and mitigating biases and associated harms in a holistic, inclusive, and culturally-aware manner. In particular, we discuss the disparate impact on society of common language tools that are not inclusive of all gender identities.

Bio: Sunipa Dev is a Research Scientist on the Ethical AI team at Google AI. Previously, she was an NSF Computing Innovation Fellow at UCLA, before which she completed her PhD at the University of Utah. Her ongoing research focuses on various facets of fairness and interpretability in NLP, including robust measurements of bias, cross-cultural understanding of concepts in NLP, and inclusive language representations. |

|

March 7 Zoom 1 pm |

Mukund Sundararajan Principal Research Scientist YouTube Stream unavailable, please join via Zoom

Predicting cancer from XRays seemed great
Until we discovered the true reason.
The model, in its glory, did fixate
On radiologist markings – treason!
We found the issue with attribution:
By blaming pixels for the prediction (1,2,3,4,5,6).
A complement’ry way to attribute,
is to pay training data, a tribute (1).
If you are int’rested in FTC,
counterfactual theory, SGD
Or Shapley values and fine kernel tricks,
Please come attend, unless you have conflicts
Should you build deep models down the road,
Use attributions. Takes ten lines of code!

Bio:
There once was an RS called MS,
The models he studies are a mess,
A director at Google.
Accurate and frugal,
Explanations are what he likes best. |

March 14 |

No Seminar (Finals Week)

|

Winter 2021

Live Stream for all Winter 2021 CML Seminars

Jan. 4 |

No Seminar

|

Florian Wenzel Postdoctoral Researcher Google Brain Berlin YouTube Stream: https://youtu.be/9n8_5tjt_Lw Deep learning models are bad at detecting their own failures. They tend to make over-confident mistakes, especially under distribution shift. Making deep learning more reliable is important in safety-critical applications including health care, self-driving cars, and recommender systems. We discuss two approaches to reliable deep learning. First, we will focus on Bayesian neural networks, which promise improved uncertainty estimation. However, why are they rarely used in industrial practice? In this talk, we will cast doubt on the current understanding of Bayes posteriors in deep networks. We show that Bayesian neural networks can be improved significantly through the use of a “cold posterior” that overcounts evidence and hence sharply deviates from the Bayesian paradigm. We will discuss several hypotheses that could explain cold posteriors. In the second part, we will discuss a classical approach to more robust predictions: ensembles. Deep ensembles combine the predictions of models trained from different initializations. We will show that the diversity of predictions can be improved by considering models with different hyperparameters. Finally, we present an efficient method that leverages hyperparameter diversity within a single model.

Bio: Florian Wenzel is a machine learning researcher who is currently on the job market. His research has focused on probabilistic deep learning, uncertainty estimation, and scalable inference methods. From October 2019 to October 2020 he was a postdoctoral researcher at Google Brain. He received his PhD from Humboldt University in Berlin and worked with Marius Kloft, Stephan Mandt, and Manfred Opper. |

|

Jan. 18 |

No Seminar (Martin Luther King, Jr. Holiday)

|

Yezhou Yang Assistant Professor School of Computing, Informatics, and Decision Systems Engineering Arizona State University YouTube Stream: https://youtu.be/IcSUBZraB3s The goal of Computer Vision, as coined by Marr, is to develop algorithms to answer What are Where at When from visual appearance. The speaker, among others, recognizes the importance of studying underlying entities and relations beyond visual appearance, following an Active Perception paradigm. This talk will present the speaker’s efforts over the last decade, ranging from 1) reasoning beyond appearance for visual question answering, image understanding and video captioning tasks, through 2) temporal knowledge distillation with incremental knowledge transfer, to 3) their roles in a Robotic visual learning framework via a Robotic Indoor Object Search task. The talk will also feature the Active Perception Group (APG)’s ongoing projects (NSF RI, NRI and CPS, DARPA KAIROS, and Arizona IAM) addressing emerging challenges of the nation in autonomous driving, AI security and healthcare domains, at the ASU School of Computing, Informatics, and Decision Systems Engineering (CIDSE).

Bio: Yezhou Yang is an Assistant Professor at the School of Computing, Informatics, and Decision Systems Engineering, Arizona State University. He directs the ASU Active Perception Group. His primary interests lie in Cognitive Robotics, Computer Vision, and Robot Vision, especially exploring visual primitives in human action understanding from visual input, grounding them by natural language as well as high-level reasoning over the primitives for intelligent robots. Before joining ASU, Dr. Yang was a Postdoctoral Research Associate at the Computer Vision Lab and the Perception and Robotics Lab, with the University of Maryland Institute for Advanced Computer Studies. He is a recipient of the Qualcomm Innovation Fellowship 2011, the NSF CAREER award 2018 and the Amazon AWS Machine Learning Research Award 2019. He received his Ph.D. from the University of Maryland at College Park, and his B.E. from Zhejiang University, China. |

|

Joe Marino PhD Student Computation and Neural Systems California Institute of Technology YouTube Stream: https://youtu.be/iVz6uwD7i6A Unsupervised machine learning has recently dramatically improved our ability to model and extract structure from data. One such approach is deep latent variable models, which include variational autoencoders (VAEs) [Kingma & Welling, 2014; Rezende et al., 2014]. These models can be traced back to the Helmholtz machine [Dayan et al., 1995], which, in turn, was inspired by ideas from theoretical neuroscience [Mumford, 1992]. In the intervening years, neuroscientists have further developed these ideas into a popular theory: predictive coding [Rao & Ballard, 1999; Friston, 2005]. Yet, the machine learning community remains largely unaware of these connections. In this talk, I discuss the links between modern deep latent variable models and predictive coding, yielding several striking implications for the correspondences between machine learning and neuroscience. This motivates a more nuanced view in connecting these fields, including the search for backpropagation in the brain.

Bio: Joe Marino is a PhD candidate in the Computation & Neural Systems program at Caltech, advised by Yisong Yue. His work focuses on improving probabilistic models and inference techniques, using neuroscience-inspired ideas, within the areas of generative modeling and reinforcement learning. |

|

Influence diagrams (IDs) extend Bayesian networks with decision variables and utility functions to model the interaction between an agent and a system and to capture preferences. The standard task in IDs is to compute the maximum expected utility (MEU) over the influence diagram and optimal policies. However, this is the most challenging task in graphical models. Therefore, computing upper bounds on the MEU is desirable because upper bounds can facilitate anytime solutions by acting as heuristics to guide search or sampling-based methods. In this talk, I will present bounding schemes for solving IDs. The first approach builds on top of the tree decomposition scheme in probabilistic graphical models and extends variational decomposition bounds in marginal MAP. The second approach is a new tree decomposition method called submodel tree decomposition. The empirical evaluation results show that the presented bounding schemes generate upper bounds that are orders of magnitude tighter than previous methods. Finally, I will conclude the talk with future directions.

Bio: Junkyu Lee received his Ph.D. from the CS department at UC Irvine, where Rina Dechter supervised him. Currently, he is a resident at the IBM Research AI planning group. His research focuses on graphical model inference and heuristic search for sequential decision making under uncertainty. He is also broadly interested in related areas such as planning and reinforcement learning. |

|

Feb. 15 |

No Seminar (Presidents’ Holiday)

|

Feb. 22 |

No Seminar

|

Robert Logan PhD Student Department of Computer Science University of California, Irvine YouTube Stream: https://youtu.be/Mim1pmEn1UU Recent progress in natural language processing (NLP) has been predominantly driven by the advent of large neural language models (e.g., GPT-2 and BERT) that are “pretrained” using a self-supervised learning objective on billions of tokens of text before being “finetuned” (i.e., transferred) to downstream tasks. The exceptional success of these models has motivated many NLP researchers to study what exactly these models are learning during pretraining that causes them to be more successful than their non-self-supervised counterparts. In this talk, we will describe the technique of prompting, an approach that answers this question by reformulating tasks as fill-in-the-blanks questions. We will begin by showing how prompts can be used to measure the amount of factual, linguistic, and task-specific knowledge contained in language models. We will then introduce an approach for automatically constructing prompts based on gradient-guided search that provides a scalable alternative to writing prompts by hand. Lastly, we will cover our ongoing work investigating whether prompting can be used as a replacement for finetuning of language models, describing some early results that demonstrate that prompting can indeed be more effective in few-shot learning scenarios while being substantially more parameter efficient.

Bio: Robert L. Logan IV is a 4th year PhD Candidate at UC Irvine, co-advised by Sameer Singh and Padhraic Smyth. His research focuses on leveraging external knowledge sources to measure and improve NLP models’ ability to reason with factual and common sense knowledge. He was selected as a Noyce Fellow and has been awarded the 2020 Rose Hills Foundation Scholarship. Robert received his B.A. in mathematics at the University of California, Santa Cruz, and has held research positions at Google and Diffbot. |

|

March 8 |

No Seminar

|

March 15 |

Finals Week

|

Fall 2020

Live Stream for all Fall 2020 CML Seminars

Oct 5 |

No Seminar

|

Forest Agostinelli Assistant Professor Computer Science and Engineering University of South Carolina YouTube Stream: https://youtu.be/shwYW9yEAIQ Combination puzzles, such as the Rubik’s cube, pose unique challenges for artificial intelligence. Furthermore, solutions to such puzzles are directly linked to problems in the natural sciences. In this talk, I will present DeepCubeA, a deep reinforcement learning and search algorithm that can solve the Rubik’s cube, and six other puzzles, without domain-specific knowledge. Next, I will discuss how solving combination puzzles opens up new possibilities for solving problems in the natural sciences. Finally, I will show how problems we encounter in the natural sciences motivate future research directions in areas such as theorem proving and education. A demonstration of our work can be seen at http://deepcube.igb.uci.edu/.

Bio: Forest Agostinelli is an assistant professor at the University of South Carolina. He received his B.S. from the Ohio State University, his M.S. from the University of Michigan, and his Ph.D. from UC Irvine under Professor Pierre Baldi. His research interests include deep learning, reinforcement learning, search, bioinformatics, neuroscience, and chemistry. |

|

Stephan Mandt Assistant Professor Dept. of Computer Science University of California, Irvine YouTube Stream: https://youtu.be/Z8juQKrCkmk Neural image compression algorithms have recently outperformed their classical counterparts in rate-distortion performance and show great potential to also revolutionize video coding. In this talk, I will show how innovations from Bayesian machine learning and generative modeling can lead to dramatic performance improvements in compression. In particular, I will explain how sequential variational autoencoders can be converted into video codecs, how deep latent variable models can be compressed in post-processing with variable bitrates, and how iterative amortized inference can be used to achieve the world record in image compression performance.

Bio: Stephan Mandt is an Assistant Professor of Computer Science at the University of California, Irvine. From 2016 until 2018, he was a Senior Researcher and Head of the statistical machine learning group at Disney Research, first in Pittsburgh and later in Los Angeles. He held previous postdoctoral positions at Columbia University and Princeton University. Stephan holds a Ph.D. in Theoretical Physics from the University of Cologne. He is a Fellow of the German National Merit Foundation, a Kavli Fellow of the U.S. National Academy of Sciences, and was a visiting researcher at Google Brain. Stephan regularly serves as an Area Chair for NeurIPS, ICML, AAAI, and ICLR, and is a member of the Editorial Board of JMLR. His research is currently supported by NSF, DARPA, Intel, and Qualcomm. |

|

Christoph Lippert Professor Hasso Plattner Institute University of Potsdam YouTube Stream: https://youtu.be/zElgAKf4AhE At the Chair of Digital Health & Machine Learning, we are developing methods for the statistical analysis of large biomedical data. In particular, imaging provides a powerful means for measuring phenotypic information at scale. While images are abundantly available in large repositories such as the UK Biobank, the analysis of imaging data poses new challenges for statistical methods development. In this talk, I will give an overview of some of our current efforts in using deep representation learning as a non-parametric way to model imaging phenotypes and for associating images with the genome.

References:

Kirchler, M., Khorasani, S., Kloft, M., & Lippert, C. (2020, June). Two-sample testing using deep learning. In International Conference on Artificial Intelligence and Statistics (pp. 1387-1398). PMLR.

Kirchler, M., Konigorski, S., Schurmann, C., Norden, M., Meltendorf, C., Kloft, M., & Lippert, C. transferGWAS: GWAS of images using deep transfer learning. Manuscript in preparation.

Bio: Lippert studied bioinformatics from 2001–2008 in Munich and went on to earn his doctorate at the Max Planck Institutes for Intelligent Systems and for Developmental Biology in Tübingen in machine learning bioinformatics, with an emphasis on methods for genome-associated studies. In 2012, he accepted a Researcher position at Microsoft Research in Los Angeles and subsequently carried out work at Human Longevity, Inc. in Mountain View. In 2017, Lippert returned to Germany to head the research group “Statistical Genomics” at the Max Delbrück Center for Molecular Medicine in Berlin. In 2018, Lippert was appointed Full Professor of “Digital Health & Machine Learning” in the joint Digital Engineering Faculty of the Hasso Plattner Institute and the University of Potsdam. |

|

Cory Scott PhD Student Dept. of Computer Science University of California, Irvine YouTube Stream: https://youtu.be/CpGfCA92rMw Microtubules are a primary constituent of the dynamic cytoskeleton in living cells, involved in many cellular processes whose study would benefit from scalable dynamic computational models. We define a novel machine learning model which aggregates information across multiple spatial scales to predict energy potentials measured from a simulation of a section of microtubule. Using projection operators which optimize an objective function related to the diffusion kernel of a graph, we sum information from local neighborhoods. This process is repeated recursively until the coarsest scale, and all scales are separately used as the input to a Graph Convolutional Network, forming our novel architecture: the Graph Prolongation Convolutional Network (GPCN). The GPCN outputs a prediction for each spatial scale, and these are combined using the inverse of the optimized projections. This fine-to-coarse mapping, and its inverse, create a model which is able to learn to predict energetic potentials more efficiently than other GCN ensembles which do not leverage multiscale information. We also compare the effect of training this ensemble in a coarse-to-fine fashion, and find that schedules adapted from the Algebraic Multigrid (AMG) literature further increase this efficiency. Since forces are derivatives of energies, we discuss the implications of this type of model for machine learning of multiscale molecular dynamics.

Reference: C.B. Scott and Eric Mjolsness. “Graph Prolongation Convolutional Networks: Explicitly Multiscale Machine Learning on Graphs with Applications to Modeling of Cytoskeleton”. In: Machine Learning: Science and Technology (2020). DOI: https://iopscience.iop.org/article/10.1088/2632-2153/abb6d2 |

|

Lukas Ruff PhD Student Electrical Engineering and Computer Science TU Berlin YouTube Stream: https://youtu.be/Uncc5y7g8Is Anomaly detection is the problem of identifying unusual observations in data. This problem is usually unsupervised and occurs in numerous applications such as industrial fault and damage detection, fraud detection in finance and insurance, intrusion detection in cybersecurity, scientific discovery, or medical diagnosis and disease detection. Many of these applications involve complex data such as images, text, graphs, or biological sequences, that is continually growing in size. This has sparked a great interest in developing deep learning approaches to anomaly detection.

In this talk, my aim is to provide a systematic and unifying overview of deep anomaly detection methods. We will discuss methods based on reconstruction, generative modeling, and one-class classification, where we identify common underlying principles and draw connections between traditional ‘shallow’ and novel deep methods. Furthermore, we will cover recent developments that include weakly and self-supervised approaches as well as techniques for explaining models that can reveal ‘Clever Hans’ detectors. Finally, I will conclude the talk by highlighting some open challenges and potential paths for future research.

Bio: Lukas Ruff is a third-year PhD student in the Machine Learning Group headed by Klaus-Robert Müller at TU Berlin. His research covers robust and trustworthy machine learning, with a specific focus on deep anomaly detection. Lukas received a B.Sc. degree in Mathematical Finance from the University of Konstanz in 2015 and a joint M.Sc. degree in Statistics from HU, TU and FU Berlin in 2017. |

|

Karem Sakallah Professor Electrical Engineering and Computer Science University of Michigan YouTube Stream: https://youtu.be/5A5dTRo50EQ Accidental research is when you’re an expert in some domain and seek to solve problem A in that domain. You soon discover that to solve A you need to also solve B which, however, comes from a domain in which you have little, or even no, expertise. You, thus, explore existing solutions to B but are disappointed to find that they just aren’t up to the task of solving A. Your options at this point are a) to abandon this futile project, or b) to try and find a solution to B that will help you solve A. While this might seem like a fool’s errand, you have the advantage over B experts of being unencumbered by their experience. You are a novice who does not, yet, appreciate the complexity of B, but are able to explore it from a fresh perspective. You also bring along expertise from your own domain to connect what you know with what you hope to learn. If you’re lucky, you may succeed in finding a solution to B that helps you solve A.

I will relate two cases in which this scenario played out: developing the GRASP conflict-driven clause-learning SAT solver in the context of performing timing analysis of very large scale integrated circuits, and developing the saucy graph automorphism program to find and break symmetries in large SAT problems. Ironically, in both cases solving problem B (GRASP, saucy) turned out to be much more impactful than solving problem A (timing analysis, breaking symmetries). Without the trigger of problem A, however, neither GRASP nor saucy would have been conceived.

Bio: Karem A. Sakallah is a Professor of Electrical Engineering and Computer Science at the University of Michigan. He received the B.E. degree in electrical engineering from the American University of Beirut and the M.S. and Ph.D. degrees in electrical and computer engineering from Carnegie Mellon University. Prior to joining the University of Michigan, he headed the Analysis and Simulation Advanced Development Team at Digital Equipment Corporation. Besides his academic duties, he has served in a variety of professional roles including the establishment of a computing research institute in Qatar for which he took a leave to serve a term of three years as the Chief Scientist. His current research is focused on automating the formal verification of hardware, software, and distributed protocols. He is a fellow of the IEEE and the ACM and a co-recipient of the prestigious Computer-Aided Verification Award for “Fundamental contributions to the development of high-performance Boolean satisfiability solvers.” |

|

Ioannis Panageas Assistant Professor Dept. of Computer Science University of California, Irvine YouTube Stream: https://youtu.be/4cepfWDiL3A In this talk we will give an overview of some results on the limiting behavior of first-order methods. In particular, we will show that typical instantiations of first-order methods like gradient descent, coordinate descent, etc. avoid saddle points for almost all initializations. Moreover, we will provide applications of these results to Non-negative Matrix Factorization. The takeaway message is that such algorithms can be studied from a dynamical systems perspective in which appropriate instantiations of the Stable Manifold Theorem allow for a global stability analysis.

Bio: Ioannis is an Assistant Professor of Computer Science at UCI. He is interested in the theory of computation, machine learning and its interface with non-convex optimization, dynamical systems, probability and statistics. Before joining UCI, he was an Assistant Professor at Singapore University of Technology and Design. Prior to that, he was an MIT postdoctoral fellow working with Constantinos Daskalakis. He received his PhD in Algorithms, Combinatorics and Optimization from Georgia Tech in 2016, a Diploma in EECS from National Technical University of Athens, and an M.Sc. in Mathematics from Georgia Tech. He is the recipient of the 2019 NRF fellowship for AI. |

|

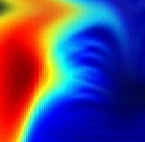

Optical flow provides important motion information about the dynamic world and is of fundamental importance to many tasks. Like other visual inference problems, it is critical to choose the representation to encode both the forward formation process and the prior knowledge of optical flow. In this talk, I will present my work on two different optical flow representations in the past decade. First, I will describe learning Markov random field (MRF) models and defining non-local conditional random field (CRF) models to recover motion boundaries. Second, I will talk about combining domain knowledge of optical flow with convolutional neural networks (CNNs) to develop a compact and effective model and some recent developments.

Bio: Deqing Sun is a senior research scientist at Google working on computer vision and machine learning. He received a Ph.D. degree in Computer Science from Brown University. He is a recipient of the PAMI Young Researcher award in 2020, the Longuet-Higgins prize at CVPR 2020, the best paper honorable mention award at CVPR 2018, and the first prize in the robust optical flow competition at CVPR 2018 and ECCV 2020. He served as an area chair for CVPR/ECCV/BMVC, and co-organized several workshops/tutorials at CVPR, ECCV, and SIGGRAPH. |

|

Dec 7 |

No Seminar (NeurIPS Conference)

|

Dec 14 |

Finals week

|

Sameer Singh Wins Best Paper Award at ACL 2020

While researchers know that contemporary natural language processing models aren’t as accurate as their leaderboard performance makes them appear, there hasn’t been a structured way to test them. The best paper award at ACL 2020 went to Prof. Sameer Singh, and collaborators Marco Tulio Ribeiro of Microsoft Research and Tongshuang Wu and Carlos Guestrin at the University of Washington, for their paper Beyond Accuracy: Behavioral Testing of NLP Models with CheckList. Their CheckList framework uses a matrix of general linguistic capabilities and test types to reveal weaknesses in state-of-the-art cloud AI systems.

Read more: https://www.ics.uci.edu/community/news/view_news?id=1817

Upgrading the UCI ML Repository

The UCI Machine Learning Repository has been a tremendous resource for empirical and methodological research in machine learning for decades. Yet with the growing number of machine learning (ML) research papers, algorithms and datasets, it is becoming increasingly difficult to track the latest performance numbers for a particular dataset, identify suitable datasets for a given task, or replicate the results of an algorithm run on a particular dataset. To address this issue, CML Professors Sameer Singh and Padhraic Smyth along with Philip Papadopoulos, Director of UCI’s Research Cyberinfrastructure Center (RCIC), have planned a “next-generation” upgrade. The trio was recently awarded $1.8 million for their NSF grant, “Machine Learning Democratization via a Linked, Annotated Repository of Datasets.”